-

Fabric Data Wrangling for Testing

Note: Currently I am using this button so you the reader know the level of AI used in my articles. For more information – go here: What’s with the AI button?

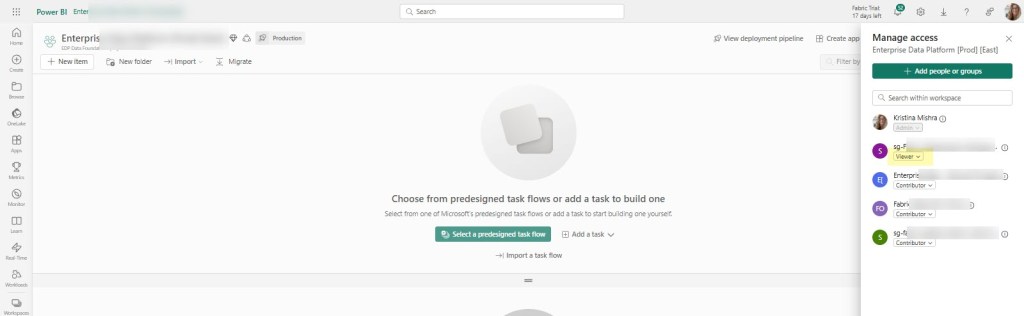

Data Wrangler has been available for a while now, but I’ll be honest, it’s not something we’ve been actively using. We’ve been heads down on time-sensitive projects for over a year and needless to say, our cup runneth over. Recently we’ve had a bit of respite and I decided to see how we could use Data Wrangler within the context of our current Microsoft Fabric data warehouse (i.e. medallion layer lakehouses).

Data Wrangler has a lot of cool features that will give you code snippets for what you want to do, but I wanted to use it a different way. I wanted to have an easy way to do a quick check for dimension tables. I also wanted an easy-peasy way for others, some of whom are not developers, to be able to do a quick sanity check of the data.

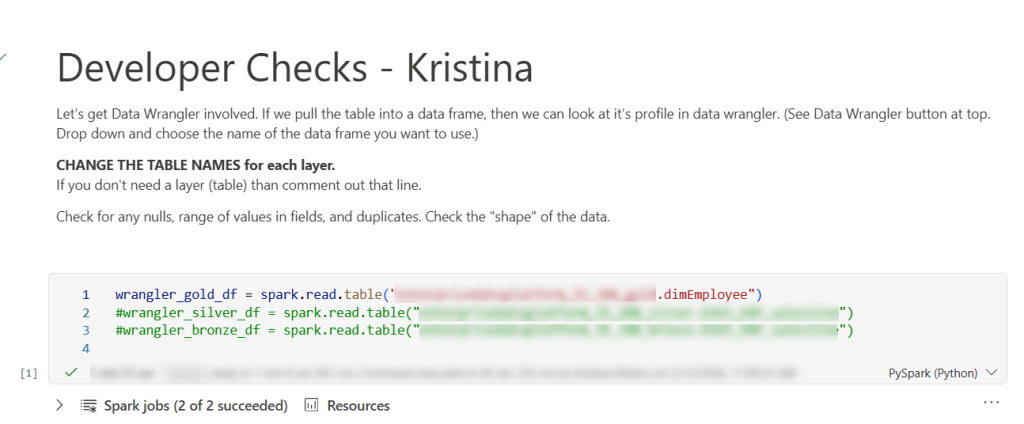

Enter the “Developer check notebook”. This notebook may have the smallest amount of code you’ve ever used. (Though you can certainly make it larger and add on to it.) There are more characters in the explanation section (because I make a copy of this notebook for each person – some who may not be coders) than actual code. The purpose of this notebook is really just to get the dimension table into Data Wrangler, so I just pop it in a data frame (sometimes several tables so I can easily test related tables).

Basically, you could just have 1 line in this notebook and that would be all you need.

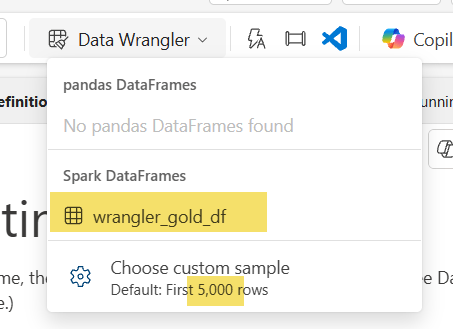

wrangler_gold_df = spark.read.table('lakehouseName.tableName')

Once you have run that cell, you can enter DATA WRANGLER WORLD. Yay! All you need is to click that little button at the top and select your data frame. Special note: the default for Data Wrangler is the first 5,000 rows, but you can change that. For example, this particular table had a little over 15K rows so I changed it to 16000. I wouldn’t do this for something really big (dimension or fact), but for 15Kish rows, it still worked as normal on an F32 capacity.

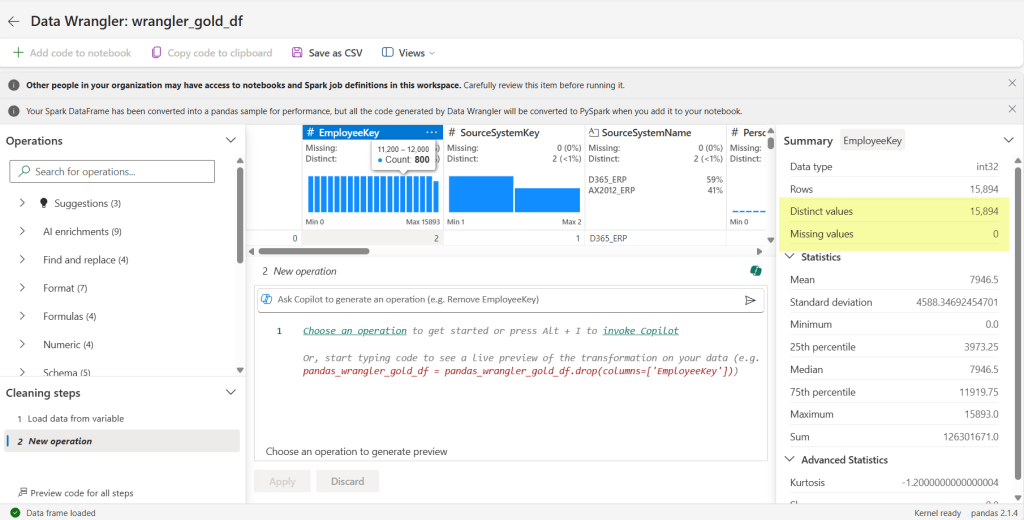

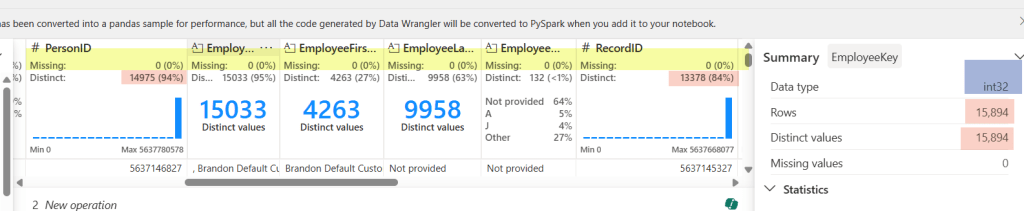

Now we are taken to a completely different area. Since a lot of our code is logic in a Spark SQL view, the ability to generate code in Data Wrangler is not what we are really using it for in this case. I’m using it to get the shape of my data and do some quick checks. The first check I’m going to do is make sure my primary key is unique. Microsoft Fabric with lakehouses doesn’t have an identity field, so you have to programmatically find a way to do it. That can be prone to error, so my first checks are with my surrogate key: is it distinct and are there any missing values? I click on the EmployeeKey column and voila! It says I have 15,894 rows, that’s the same number as my Distinct values, and I have no missing values.

We see we are all clear. Awesome-sauce.

I can see from my third field (SourceSystemName) that it only contains 2 values: D365_ERP and AX2012_ERP – which are EXACTLY the only values it should contain. Double Yay!
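If you ever want the same surrogate key check outside the Data Wrangler UI, here is a minimal sketch in plain Python. It works on a small list of dictionaries standing in for rows of the dimension table; the sample rows and the function names profile_key and key_is_clean are made up for illustration and are not something Data Wrangler generates.

```python
def profile_key(rows, key):
    """Return row count, distinct count, and missing count for one column."""
    values = [row.get(key) for row in rows]
    missing = sum(1 for value in values if value is None)
    distinct = len({value for value in values if value is not None})
    return {"rows": len(values), "distinct": distinct, "missing": missing}


def key_is_clean(rows, key):
    """A key is clean when nothing is missing and every value is distinct."""
    stats = profile_key(rows, key)
    return stats["missing"] == 0 and stats["distinct"] == stats["rows"]


# Hypothetical sample rows standing in for the dimension table.
sample_rows = [
    {"EmployeeKey": 1, "SourceSystemName": "D365_ERP"},
    {"EmployeeKey": 2, "SourceSystemName": "AX2012_ERP"},
    {"EmployeeKey": 3, "SourceSystemName": "D365_ERP"},
]

print(profile_key(sample_rows, "EmployeeKey"))
print(key_is_clean(sample_rows, "EmployeeKey"))
```

The three numbers mirror what the column summary shows: total rows, distinct values, and missing values.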

Scrolling along to each field, I can discover more. For the field I have selected, I can verify the data type is correct (see int32 highlighted in blue for the EmployeeKey) – something we sometimes mess up by putting in a text default when we should be using a numerical default. I can also see as I scroll through the fields that there are no missing values in my columns (see highlighted yellow area).

The pink highlight represents something I need to check out. Normally our RecordID is unique (recid from D365 and AX2012), but in this case it does not match the distinct values (it doesn’t even match PersonID!). That’s because we have 2 source systems and since the recid is system-generated, there is always the possibility for overlap. But don’t worry, we can still check it easily here.

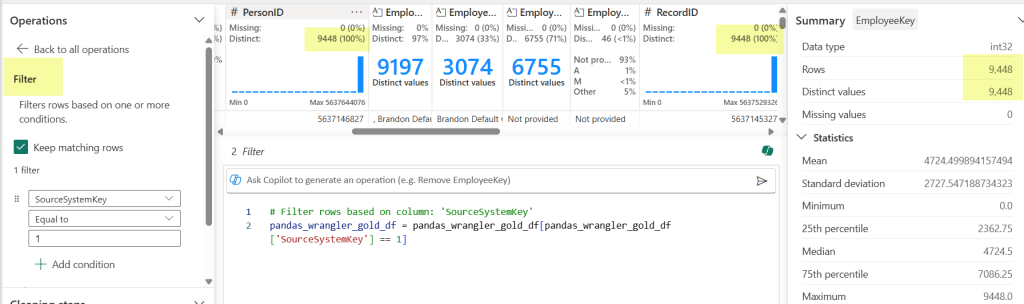

By choosing the filter Operation (under Sort and filter) on the left-hand side, I can check my counts against each source system to test my theory. Yay Again! They now match. Spoiler alert, the count matched when I changed the filter to my other source system too.
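For anyone who prefers to see the logic written down, the per-source-system comparison can be sketched in plain Python. The rows and the RecordID values below are invented, and key_stats_by_group is a hypothetical helper name; the point is only that a key can overlap across systems while still being unique within each one.

```python
from collections import defaultdict


def key_stats_by_group(rows, group_col, key_col):
    """Return {group: (row_count, distinct_key_count)} for each group value."""
    counts = defaultdict(int)
    distinct = defaultdict(set)
    for row in rows:
        group = row[group_col]
        counts[group] += 1
        distinct[group].add(row[key_col])
    return {group: (counts[group], len(distinct[group])) for group in counts}


# Hypothetical rows: the same RecordID appears once in each source system.
sample_rows = [
    {"SourceSystemName": "D365_ERP", "RecordID": 5637144576},
    {"SourceSystemName": "D365_ERP", "RecordID": 5637144577},
    {"SourceSystemName": "AX2012_ERP", "RecordID": 5637144576},
]

# Across the whole table the distinct count is lower than the row count...
overall_distinct = len({row["RecordID"] for row in sample_rows})
print(len(sample_rows), overall_distinct)

# ...but within each source system the two numbers match.
per_system = key_stats_by_group(sample_rows, "SourceSystemName", "RecordID")
print(per_system)
```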

This also works in other ways. In our logic for creation/updates to this table, we had some code that didn’t account for SCD with an employee’s name in the source system. We don’t have a requirement to keep the history in our data warehouse, so this was making duplicates across fact tables. RecordID (aka recid) is the source system’s generated surrogate key, so when I saw that the RecordID did not match the PersonID count, I knew to go back to the code and see why (thankfully a really easy fix). By looking at some of these things first, we can hopefully avoid testers throwing bugs back to us.

Another thing you can see in data wrangler is the shape of your data. What the heck does “shape of your data” mean? It’s basically saying what it looks like and how it is distributed. Yea – maybe that still doesn’t clear things up. Let me show you:

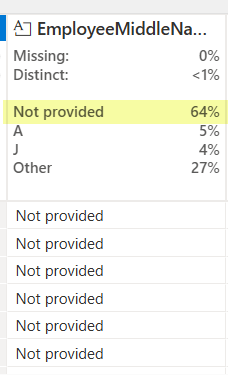

For most of our text fields in a dimension, we have a ‘Not provided’ default value. This lets me know that 64% of our values are the default. While that may be totally expected in some columns (like EmployeeMiddleName), that’s going to set off alarm bells if I see ‘Not provided’ ANYWHERE for my SourceSystemName field. That column should always have a valid source system name. In other columns maybe seeing the ‘Not provided’ is ok, but starts to get worrisome at some percentage. Different fields will have different requirements, but sometimes you will see things you didn’t even think of as a developer. (Mind you, maybe you SHOULD, but sometimes we don’t live in a perfect world and you can’t do every single check.)
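To make the "worrisome at some percentage" idea concrete, here is a small plain-Python sketch. The per-column limits, the sample rows, and the helper names default_share and columns_over_limit are all assumptions for illustration; pick limits that match your own requirements.

```python
def default_share(rows, column, default="Not provided"):
    """Fraction of rows whose value in `column` equals the default."""
    if not rows:
        return 0.0
    hits = sum(1 for row in rows if row.get(column) == default)
    return hits / len(rows)


def columns_over_limit(rows, limits, default="Not provided"):
    """Return {column: share} for columns whose default share exceeds its limit."""
    flagged = {}
    for column, limit in limits.items():
        share = default_share(rows, column, default)
        if share > limit:
            flagged[column] = share
    return flagged


# Hypothetical sample rows standing in for the dimension table.
sample_rows = [
    {"EmployeeMiddleName": "Not provided", "SourceSystemName": "D365_ERP"},
    {"EmployeeMiddleName": "Not provided", "SourceSystemName": "AX2012_ERP"},
    {"EmployeeMiddleName": "Ann", "SourceSystemName": "D365_ERP"},
    {"EmployeeMiddleName": "Not provided", "SourceSystemName": "Not provided"},
]

# Assumed limits: middle names may be mostly defaults, source system never.
limits = {"EmployeeMiddleName": 0.9, "SourceSystemName": 0.0}
flagged = columns_over_limit(sample_rows, limits)
print(flagged)
```

A limit of 0.0 means any default value at all gets flagged, which is the rule described above for SourceSystemName.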

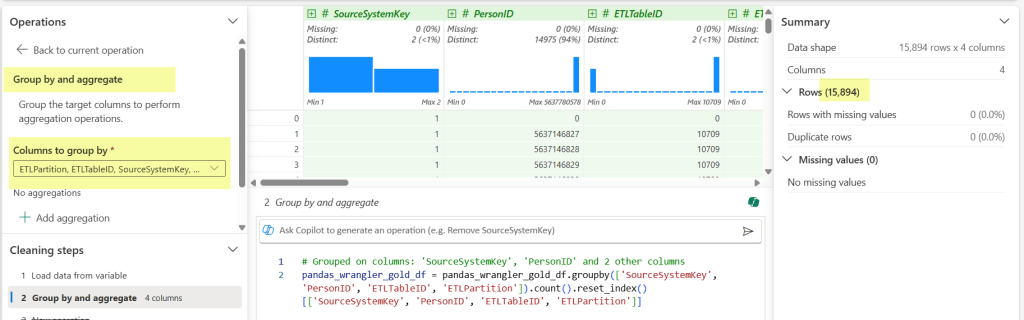

Last but not least, one of the big checks I do is validate that the assumption of alternate key (which was probably used in a window function or 2 … or 3) is correct. For this I use the Operations function Group by and aggregate.

Once I select Group by and aggregate, I then select my columns that are part of the alternate key I’ve used in my code. (Those buggers end up being important when you JOIN later.) We can see my row count meets my original one against the PK, which gives me all the warm and fuzzies. Added bonus that it generates the code block and then I can see all the fields easily that I selected in the drop down.
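The idea behind that group-by check, sketched in plain Python: group on the alternate key columns, count the rows in each group, and confirm no group has more than one row. The column choice (SourceSystemName plus RecordID), the sample rows, and the helper name duplicate_alternate_keys are assumptions for illustration, and this is not the code Data Wrangler generates.

```python
from collections import Counter


def duplicate_alternate_keys(rows, key_columns):
    """Return {key_tuple: count} for alternate key values that repeat."""
    counts = Counter(tuple(row[col] for col in key_columns) for row in rows)
    return {key: count for key, count in counts.items() if count > 1}


# Hypothetical alternate key for this illustration.
alternate_key = ["SourceSystemName", "RecordID"]

sample_rows = [
    {"EmployeeKey": 1, "SourceSystemName": "D365_ERP", "RecordID": 100},
    {"EmployeeKey": 2, "SourceSystemName": "AX2012_ERP", "RecordID": 100},
    {"EmployeeKey": 3, "SourceSystemName": "D365_ERP", "RecordID": 101},
]

# An empty result means the group count equals the row count.
print(duplicate_alternate_keys(sample_rows, alternate_key))

# Adding a repeated (SourceSystemName, RecordID) pair surfaces the problem.
bad_rows = sample_rows + [
    {"EmployeeKey": 4, "SourceSystemName": "D365_ERP", "RecordID": 101},
]
print(duplicate_alternate_keys(bad_rows, alternate_key))
```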

When I’m done, I don’t bother saving the generated items from data wrangler (though I could if I wanted to.) I just click the back arrow and return to my tiny notebook.

So rather than have to type a bunch of code to do these checks (or hand hack reusable code in multiple places), I just need the table name and one line of code in a notebook:

wrangler_gold_df = spark.read.table('myawesomegoldlakehouse.dimEmployee')

That’s the essence of it. And it ends up not only saving me time, but also letting me visually spot new use cases that may be particular to a field that I didn’t see before. Even better, I can copy the notebook for other people to use for their own testing. We have testers that don’t typically do anything in a notebook. If they don’t want to use other methods, then they have the option of going into their notebook and taking a peek under the covers – which is more time saved.

Do you have any different ways you use Data Wrangler? Share the knowledge for others in the comment section.

Happy Wrangling!

-

Synapse Link and Refreshing a Data Source

Note: I actually wrote 80% of this last year and then never published it. We were doing a D365 refresh this weekend and encountered the same problem, and I’m super glad I found this in my draft box. I decided to finish it and post for next time, because I’m bound to forget about it again.

Ah – you’ve finally got your Synapse Link set up and everything is trucking along nicely. You’ve made it through Dev, QC, and Prod, and it’s been pretty hands off for a bit. Until someone decides you need a refresh at your data source.

In all fairness, it’s usually a pretty legit request. So why the grumpy dog face under the covers? Synapse Link is a pain to set up if you are doing anything slightly different or following best practices. Breaking it is easy, and you just know you are going to have to burn time to fix stuff – it’s really just a matter of how much time.

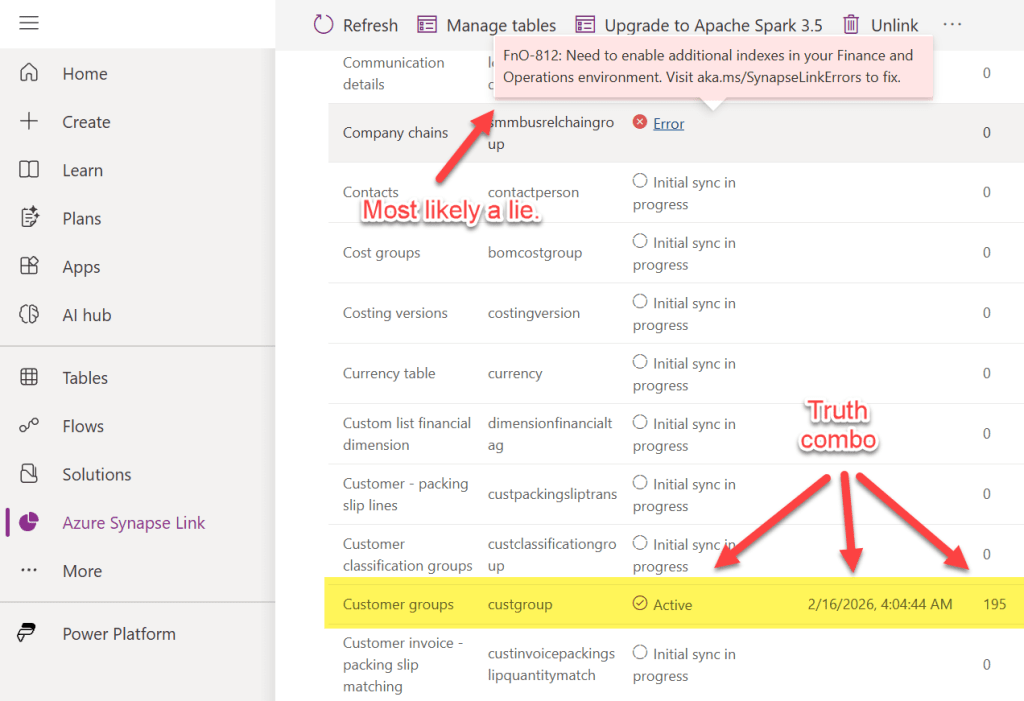

We recently had to refresh our D365 F&O Tier 2 environment for a pilot project, and I was dreading having to fix the 130 (out of 3689) tables in our link. But sadly, every last one of them was showing up with the error:

CT-809: Row version change tracking property disabled in Table. This table is paused. Visit aka.ms/SynapseLinkErrors to fix

No problem, just get your D365 peoples to do this: Use change tracking to synchronize data with external systems – but then you find out that the row version property is enabled on your tables and you are thinking…Now what???

First off, make sure you have a list of your tables saved off somewhere. We have ours in excel so we can add and remove columns for things like “In Synapse Link”, “bronze layer”, “silver layer”. It just makes it easier. One of these days you are going to not only see you NEED it, but you’ll be really thankful you did this extra step.

TIP: With Copilot now a thing, you may want to just grab the tables you have already listed in Synapse Link. That way you don’t leave any out.

Next, make sure you don’t have any tables that were updating in the middle of the data source refresh. Otherwise Synapse Link seems to hyper focus on that one and loops over and over in its inability to complete the last known task. Maybe it will eventually give up, but who really has time for that? (This morning I was looking at 0 movement after 16 hours if that gives you any idea.) To speed up the process – you are going to want to do a little clean up.

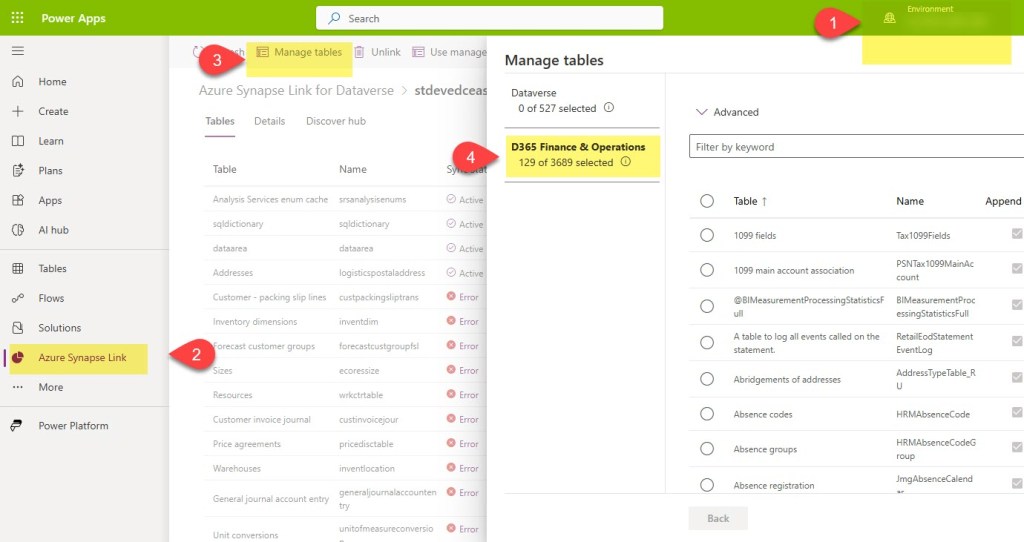

Go to make.powerapps.com and do the following:

- Select the environment you are trying to manage

- Click Azure Synapse Link and then click on your Synapse link

- Once your Synapse Link opens, you will have the option to click Manage Tables

- Select which data source group of tables you want to use. In the example below, I need my D365 F&O tables.

Then, deselect the offending table(s) and click the save button at the bottom. Once you see it has been removed from your main table list, go back in your data source (#4 in the image) and add it back. Click save. Again – removing the road blocker by itself may save you some headaches down the road. Once the road blocker has been resolved, it should show up as Active.

If you don’t know which one is the road blocker, then go ahead and remove them all. You will have to do that anyway, so maybe even just start off with that. Your choice.

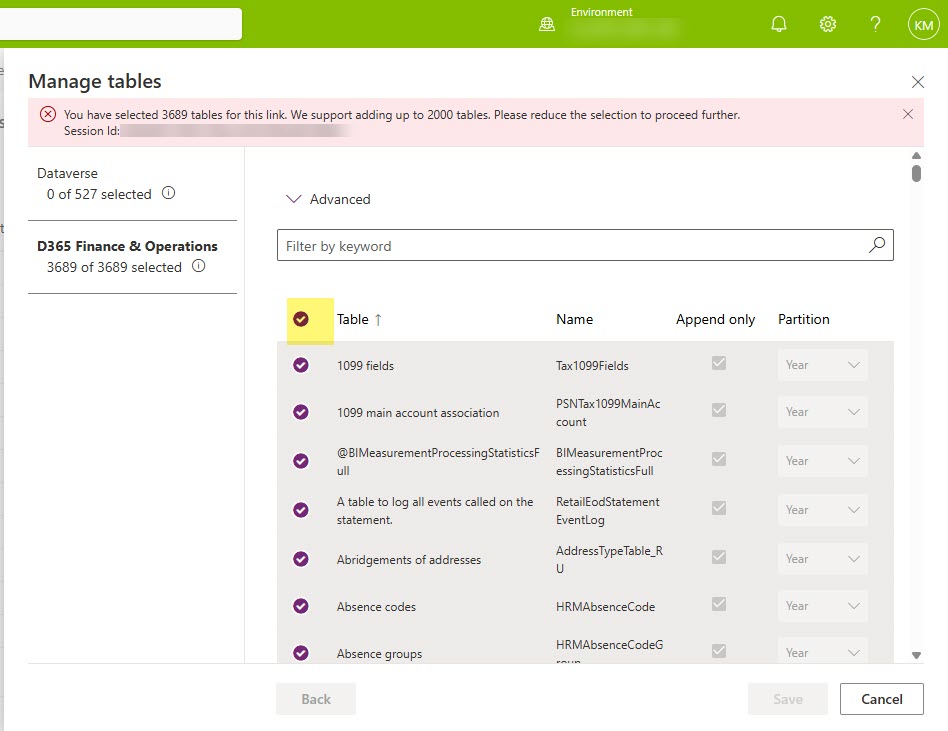

TIP: If you have hundreds or thousands of tables, you have my deepest sympathies. I’ve tried several methods of quick fixes, many that have brought my hopes up, but none that have succeeded to do anything except waste my time. The good news is you can add many tables at once separated by commas. I tend to add mine in batches of somewhere between 38-60. To remove them all, let’s go back into Manage Tables, and click the little circle that selects all your tables at once.

You will immediately notice an error message at the top that says something along the lines of “You’ve selected all the tables – WTAH. Fabric Link was made for you” or something like that. No worries that the save button is probably greyed out, you didn’t want to save anyway. But you did want to be able to deselect all the tables right afterwards, and this was the easiest way to do it.

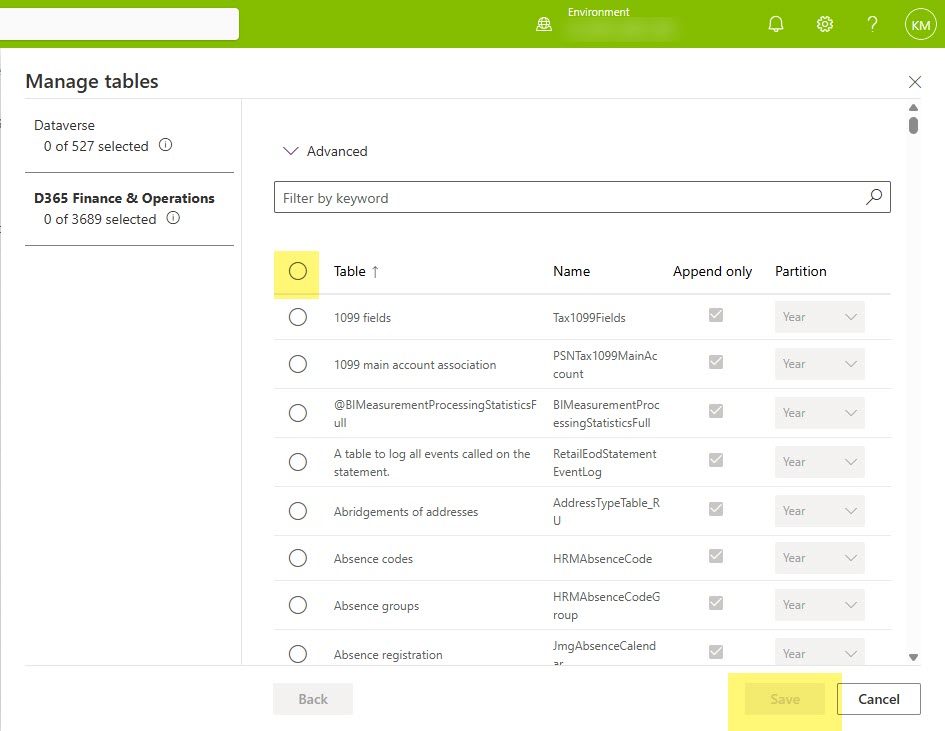

After deselecting all the tables, you may notice that you still don’t have the ability to click the Save button. This is probably Microsoft’s way of protecting you from really messing things up, so don’t fret. Instead, go to your list of tables that you (hopefully) have saved off somewhere, and add back 1. Preferably a tiny little fella. CustGroup is a fav of mine, but you do you and add 1 tiny table, and click save. And then wait…

The first table usually takes longer than you would think, so don’t let that scare you. Our custgroup table has about 200 rows and took about an hour with a small sized Spark pool. I think it’s taken that long with 10 rows as well, it’s just the curse of being the first table through. Your Spark pool configuration probably makes the most difference. And if you’ve set that bad boy to small sized, then now may be the time to bump it up (scale) a bit. At least until all your tables have cleared.

When your tables first go to Active, you may get super excited and then see your dreams pass away with a sudden error about indexes. Don’t worry: that’s Synapse Link’s way of saying “hold up, I’m still doing stuff”. That error should resolve on its own. Probably in just a few minutes, but might be an hour or two. There are too many factors that can change the timing of it, but I can safely say I see that error message all the time and it is always resolved.

Once you get your first “Active” that has passed the index error message and now is giving you a timestamp and a row count, you know you are on your way. You’ll see each table go through “Initial sync in progress”, to Active, to Error (Fno 812), and then back to Active. That means you are almost done. Just like this post. That has been sitting in my draft box, thankfully, for probably 6-9 months. But that’s the good thing about technologies that are no longer the new and shiny toy – the process to fix the problem will probably stay the same. Until you are pushed to something else.

Note: Full loads tend to take a bit. Once you’ve gotten a table or 2 in the stable Active status, I’d let it run through the night. Usually by morning my tables are in place.

Are you still using Synapse Link like we are? I’m curious to hear if your reasons are the same as ours. Drop a message in the comments if you don’t mind sharing!

-

Fabric Rest APIs – A Real World Example with FUAM

In our world of AI generated material, I wanted to be clear that content posted by me has been written by me. In some instances AI may be used, but will be explicitly called out either in these article notes (e.g. if used to help clean up formatting, wording etc..) or directly in the article because it is relevant to what I am referring to (e.g. “Fabric CoPilot explains the expression as…”). Since articles are written by me and come from my experiences, you may encounter typos or such since I am ADHD and rarely have the time to write something all at once.

Recently a colleague of mine was inquiring about creating a service principal to use with a Microsoft Fabric REST APIs proof of concept project we were wanting him to develop for some governance and automation. Since he was still in the research phase, I told him we already had one he could use and did a brief demo on how we use it with FUAM (Fabric Unified Admin Monitoring tool). It occurred to me that others may find this a useful way to learn how to use Fabric or PBI REST APIs. If you are also fairly new to using pipelines and notebooks in Fabric, then you can get the added bonus of learning through an already created, well-designed and active live Fabric project in your own environment. If you do not have FUAM installed in a Fabric capacity, or do not have permissions to see the items in the FUAM workspace, or have no intention/ability to change either of those blockers, then you can stop reading here. Unless you are just generally curious – then feel free to read on. Or not. You do what works for you.

Incidentally, if you haven’t implemented FUAM and are actively using Microsoft Fabric, I highly recommend it. There is a lot of great information about your environment that is all in one place, and has great potential for you to create add-ons. You don’t even need a heckuva lot of experience to implement it, and once you get the core part up and running, it’s pretty solid (with regular updates that are optional).

How FUAM Uses Fabric Rest API Calls

The FUAM workspace/project uses Fabric/PBI API calls (in part) to collect various information about your Fabric environment. It uses other things too like the Fabric Capacity Metrics app, but for brevity we will only cover the REST API stuff here. FUAM stores information in its FUAM_Lakehouse located in the FUAM workspace. The lakehouse includes info on workspaces, capacities, activities, and a ton of other information about things that go on in Fabric.

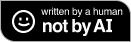

To see what is collected for FUAM from API calls, you need to first look at some of the pipelines. Go to your FUAM workspace and filter for Pipelines.

Image 1: FUAM Workspace with pipeline filter applied.

Yes, the image above shows the PBI view but it is same-sies for the Fabric view. Or close to it. You probably won’t have the tag next to the Load_FUAM_Data_E2E pipeline like I do, but that’s because I implemented a tag for that one myself. It’s the main orchestration pipeline that I want to monitor and access separately. Plus it’s the main one you access on the rare occasion you need to access any of them and I’m a visual person. All this to get to the point: that’s NOT the pipeline we want to use here.

A quick note on why you may not want to start from scratch for a project that uses Fabric REST API calls if you already have FUAM and all needed access to FUAM objects.

- You get a real world example that you can add on to if the information you need isn’t already in the lakehouse.

- You don’t have to go through setting up a new service principal / enterprise app in Azure.

- You don’t risk doing duplicate calls of the exact same information in different places.

- Depending on what you are doing with the REST API and what capacity size you are on, calls can really raise your CUs.

- You may get a tap on the shoulder from the security team if they see too many tenant info API calls.

- There is a 500 request per 100 workspace Fabric REST API limit. You may think that there is no way you will hit that, but when I first set up FUAM, I definitely hit it a few times as I was tweaking the job runs.

So how does FUAM use the REST API calls? That depends on what you are doing and how you are accessing it, but for the purposes of this post, we are going to review how it uses them inside pipelines (the first path in the image below).

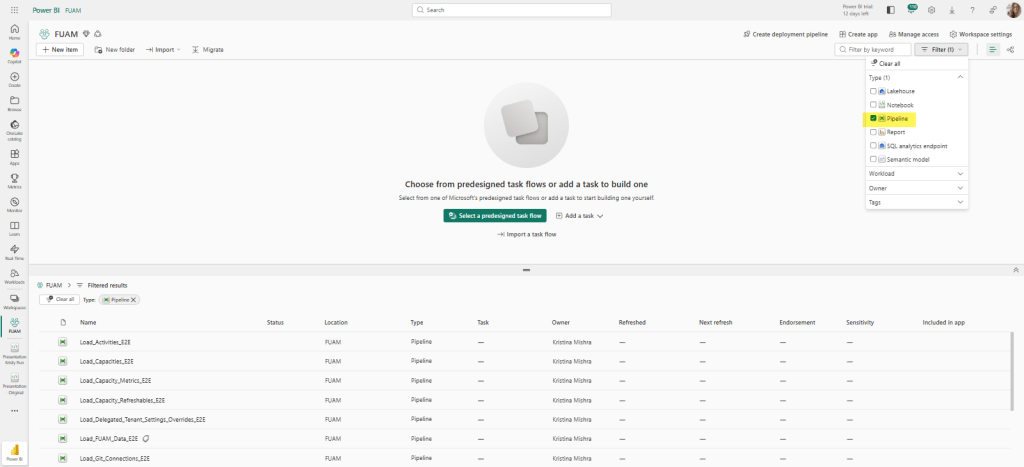

For our first example, let’s take a look at the pipeline: Load_Capacities_E2E. If you look at the Copy data activity, you will see where the Source uses a data connection that was previously set up (in this case, the data connection uses a service principal to connect).

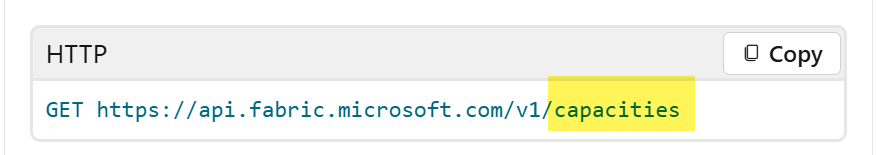

Image 3: Where the API magic happens

But it’s the Relative URL and the Request method that are doing the heavy lifting here. This is where the API call is occurring. And if you want more information on how this is automagically happening, then click on the General tab and you will see a handy dandy URL provided in the Description section.

Image 4: Handy dandy link

What is going on in Image 3 is really that Relative URL value doing the HTTP request: GET https://api.fabric.microsoft.com/v1/capacities

Image 5: Image from the handy-dandy link page.

This is where the magic really occurs because it makes the API call and plops the info into a json file in the FUAM_Lakehouse under Files->raw->capacity.

Image 6: Location of capacity.json file in lakehouse.

Looking back at Image 3 – the pipeline component – we see there is a notebook. The notebook listed there (01_Transfer_Capacities_Unit) is really about pulling the data from the json file, cleaning it and adapting it to a medallion architecture that ultimately lands in the Table section of the lakehouse. (That’s the short description; you should pop open the notebook yourself to walk through how that is done. If you are new to notebooks and want a walk-through of what each line of code does, then plop the code snippets into Copilot. It does an excellent job of code walk-throughs.)

But the heavy lift to get the data is done in the Copy data task which stores the result of the API call in the json.
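If you want to see what that Copy data activity boils down to outside a pipeline, here is a minimal Python sketch using only the standard library. It builds the same GET request against the capacities endpoint but does not send it. The token placeholder is hypothetical (in FUAM the data connection's service principal handles authentication), and treating the response as a JSON object with a "value" list is my assumption, so check the API page from the handy dandy link before relying on it.

```python
import json
import urllib.request

FABRIC_API_BASE = "https://api.fabric.microsoft.com/v1/"


def build_capacities_request(access_token):
    """Build (but do not send) the GET request for the capacities endpoint."""
    return urllib.request.Request(
        url=FABRIC_API_BASE + "capacities",
        method="GET",
        headers={"Authorization": f"Bearer {access_token}"},
    )


def parse_capacities(body_text):
    """Pull the list of capacities out of a JSON response body.

    Assumption: the body is an object with a "value" list.
    """
    payload = json.loads(body_text)
    return payload.get("value", [])


# Placeholder token; a real one would come from your service principal.
request = build_capacities_request("<access-token>")
print(request.get_method(), request.full_url)

# To actually send it (needs a valid token and network access):
# with urllib.request.urlopen(request) as response:
#     capacities = parse_capacities(response.read().decode("utf-8"))
```

The base URL plus the relative part mirrors how the pipeline splits things: the connection holds the base, and the Relative URL field holds "capacities".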

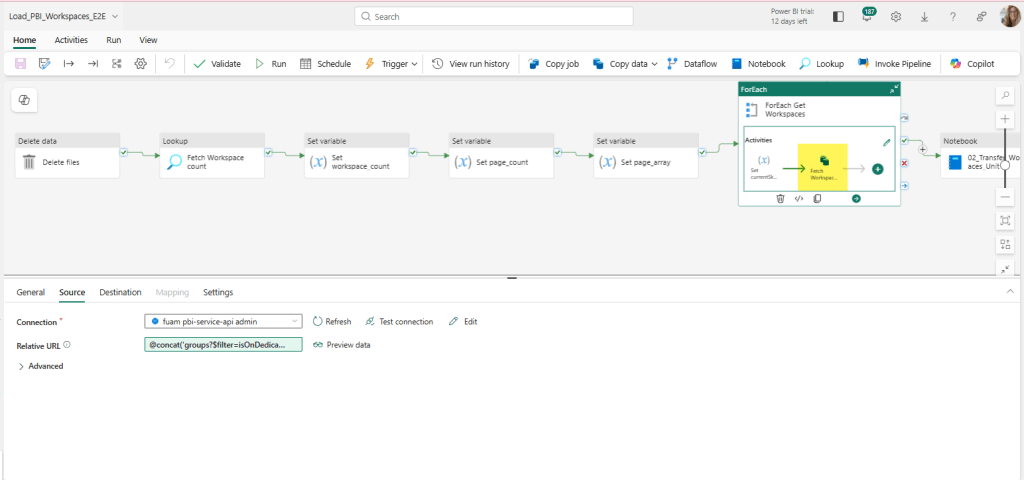

That was the simple straightforward example. Once you’ve mastered that rabbit hole, you can move onto a more advanced example in FUAM: Workspaces. Workspaces is slightly different. It still uses a copy data activity in its pipeline, but it’s a little hidden (unless you are looking at icons) in the Load_PBI_Workspaces_E2E pipeline.

Image 7: Workspace pipeline

If we explode (aka – double click) that out from the pipeline, we can see the Copy data activity a little easier.

Image 8: Workspace Relative URL more complex.

Now the Relative URL looks different. The Relative URL here uses a Pipeline expression builder. Here is the full expression:

@concat('groups?$filter=isOnDedicatedCapacity eq true&$top=',variables('limit'),'&$skip=', variables('currentSkip'))

Fabric Copilot explains the expression as follows:

This expression builds a URL string for a REST API call to fetch a list of groups (workspaces) that are on dedicated capacity. It uses the concat function to join several parts together:

– 'groups?$filter=isOnDedicatedCapacity eq true&$top=': This is the start of the URL, specifying that only groups with isOnDedicatedCapacity set to true should be returned, and that the number of results should be limited by the $top parameter.

– variables('limit'): This inserts the value of the pipeline variable 'limit', which determines how many results to return per page (for example, 5000).

– '&$skip=': This adds the $skip parameter to the URL, which is used for pagination (to skip a certain number of results).

– variables('currentSkip'): This inserts the value of the pipeline variable 'currentSkip', which tells the API how many results to skip (for example, 0 for the first page, 5000 for the second page, etc.).

The final result is a URL like: 'groups?$filter=isOnDedicatedCapacity eq true&$top=5000&$skip=0' This URL can be used to fetch a specific page of workspaces from the API, with the number of results and the starting point controlled by the 'limit' and 'currentSkip' variables. This is useful for processing large numbers of workspaces in batches (pagination).

All this to say it still calls the REST API with some added criteria, and then plops the result in a json in the FUAM_Lakehouse under the Files->raw->workspaces directory. The notebook 02_Transfer_Workspaces_Unit is similar to the capacity example, in that it pulls the data from the json file, cleans it and adapts it to a medallion architecture that ultimately lands in the Table section of the lakehouse.
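The pagination pattern that the expression implements can be sketched in plain Python. The URL builder mirrors the concat expression; everything else is an assumption for illustration: the fake page fetcher, the made-up workspace names, and the stop condition (quit when a page comes back shorter than the limit). I have not copied how FUAM's pipeline decides when to stop looping.

```python
def build_relative_url(limit, current_skip):
    """Mirror the pipeline's concat expression for one page."""
    return (
        "groups?$filter=isOnDedicatedCapacity eq true"
        f"&$top={limit}&$skip={current_skip}"
    )


def fetch_all_pages(fetch_page, limit):
    """Call fetch_page(relative_url) until a page is shorter than the limit."""
    results = []
    current_skip = 0
    while True:
        page = fetch_page(build_relative_url(limit, current_skip))
        results.extend(page)
        if len(page) < limit:  # assumed stop condition
            break
        current_skip += limit
    return results


# Fake data and fetcher so the sketch runs without calling any API.
FAKE_WORKSPACES = [f"workspace-{i}" for i in range(12)]


def fake_fetch_page(relative_url):
    """Read $top and $skip back out of the URL and slice the fake list."""
    query = relative_url.split("?", 1)[1]
    params = dict(part.split("=", 1) for part in query.split("&"))
    top = int(params["$top"])
    skip = int(params["$skip"])
    return FAKE_WORKSPACES[skip:skip + top]


print(build_relative_url(5000, 0))
print(len(fetch_all_pages(fake_fetch_page, limit=5)))
```

With 12 fake workspaces and a limit of 5, the loop requests skips of 0, 5, and 10 before stopping on the short last page.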

Now What?

The possibilities of what you can do are pretty big. Take a look at the list of REST APIs available and suit to your needs (and permissions). Personally I’d be inclined to store it in the main FUAM lakehouse (with source control implemented of course), but I can see use cases that may put it in another workspace.

Besides using the FUAM workspace as a live example of working calls to REST APIs, you can also extend your FUAM module to include more information from REST APIs that it may not already capture. It may end up being a great candidate as an add-on to your FUAM reports, or elsewhere if you want to limit security in your FUAM workspace. If you try any of this out, please share your experiences, creations, and this article so others can learn and grow as well. That’s what has made our community strong for the decades I’ve been lucky to be a part of it.

-

CFS: DEI Recorded Edition!

Introducing the CFS: DEI Recorded Edition for the (Virtual) KCSSUG.

When you run a user group, conference, etc.. you sometimes are faced with the issue of not getting enough diversity in your submissions. I think about this a lot and try to figure out levers I can pull to make changes, even if they are small. Time seems to be a big factor for many in underrepresented groups, and I’m certainly no stranger to that dilemma myself.

So I’m trying something new with my user group: a recorded option that isn’t bound by a specific date/time. The idea came after realizing that there are important voices that need to be heard in the community, but having availability that coincides with a static schedule can be sometimes difficult for underrepresented speakers. I want to introduce another way for all voices to be heard: the #KCSSUG YouTube channel. In addition to providing a space for speakers to be heard, it also provides a great benefit for our members to see more content from people they can either relate to and/or get new perspectives from. Diversity of perspective and experience brings more knowledge to others.

Speakers will submit their topics as normal (please feel free to submit multiple), which will go through a normal selection process. Here are the format options available for sessions:

- Have a one-on-one talk with the organizer about a specific topic.

- Submit with multiple speakers to have a round table discussion with organizer.

- Record at home at a time convenient to you.

If you have an idea of another option, throw it in the notes section of your submission. You can submit any length, including lightning. Let’s go crazy and not restrict ourselves.

Well – actually – there is a restriction. Technically 2: it has to be approved and can’t contain sponsor content. Obviously we want to make sure that we serve quality content, but rarely have I seen that as an issue and I can give suggestions to get you polished if you are feeling you need it. Cough cough: New Stars of Data Speaker Improvement library. Oh oh! Speaking of which, I see a couple there I need to look/relook at myself.

This will only work if people know about it, so please share far and wide. In fact, after sharing, steal the idea for your own group. I won’t tell. I’d also LOVE to hear your ideas on other things I could be doing.

WHAT ABOUT YOUR REGULAR SCHEDULED SESSIONS?

No worries, we will still have those. Our call for speakers on our regular schedule will go out in April-ish. We currently have an evening option (6 PM CDT) and a lunch&learn option (12 PM CDT). We will be sending out additional information to see if the day of week works for everyone so stay tuned.

-

Synapse Link Setup: No drop down for Spark

Recently we started a new pilot project for Microsoft Fabric using D365 F&O (ERP) as the data source utilizing Synapse Link to get it out of Dataverse. If you are familiar with this architecture pattern, you know it can be pretty painful at times. Alas, Fabric Link will not work for us at this time, so I’ll just leave it at that for now. Just know that this problem is specific to a Synapse Link setup.

Previously, Spark 3.4 was not available to use for Synapse Link. That created a bit of a panic among people using D365 F&O with Synapse Link, because Spark 3.3 is going out of support on March 31, 2025. I don’t know what the cost of D365 F&O is for most, but I’m pretty sure it’s like a gazillion dollars. Recently I saw people were starting to use Spark 3.4 with D365 F&O and Synapse Link, but they were also having trouble.

Getting around some other issues we’ve been encountering, we were finally able to set up our Synapse Link. The setup screen confirmed we needed to use Spark 3.3.

UPDATE: The UI below now properly says Spark 3.4, but I’m leaving this here in case it happens with another version in the future.

Here’s a close up in case you can’t see it:

The problem was, after I filled out all the other required information, there was nothing in the drop down box for spark. I confirmed on the Azure side that everything was set up correctly and that Synapse and the storage account were seeing each other, but nothing in the drop box.

Now at this point I could drag this post out and tell you all the things I did to try and fix it, but I’m getting a little annoyed at unnecessarily long posts lately, so I’ll just skip to the solution: Spark 3.4 is actually required now.

Once we recreated a Spark 3.4 pool, all of a sudden it appeared in the drop down box and we could move to the next screen. Unfortunately right after we got that fixed we ran into a Spark 3.4 bug, but that was fixed and pushed out in about 2 days. Finally we can move on to the Fabric portion of our project.

Note: we did let Microsoft know about the erroneous message for 3.3, but as of yesterday it was still showing up when you go to set up a new Synapse Link. Update: it was showing up correctly when I checked again on Feb 6th.

-

Testing is <redacted by HR>

Cool your jets. I’m not talking about Certification tests or the like. I’ll leave that idea to ponder on later. I’m talking about at an earlier level: elementary school. (Warning: Rant Coming.)

In the US, elementary, middle/junior-high, and high schools generally administer tests throughout the year “to understand their students’ needs and to personalize their teaching methods”. What they often don’t say is that they use it to place kids in advanced classes. What’s that you say? That makes complete sense? While it may seem like it on a surface level, I encourage you to think about it a little deeper. Because it’s a circular loop that grants some kids benefits that result in more opportunities later in life, even though their initial ability was no greater.

Think about it. Most of the time you aren’t even told when these tests are coming. Maybe your kid didn’t get enough sleep one night, has an illness coming on, just got in a fight with their BFF, or whatever. BOOM! They take a test. A test that determines if they are able to get into some extended learning program or not. A line is drawn in the sand and those who meet that number or higher get the option to have advanced learning taught to them.

The score that is often used is based on a percentile, sometimes from a previous year. Does the child’s score fall into the 95th percentile? So the next time the kids are tested, the kids that have had higher learning taught to them in class, are way more likely to be in that top percentile. Knowledge is cumulative, and there is a direct correlation to kids that are receiving this benefit to having a higher score during the next testing period.

Let’s give an example: Maybe your third grader isn’t taught fractions yet (I have no idea when fractions are taught, so maybe this isn’t an exactly accurate example.) But one day, Joe looks at his older siblings book and learns something about fractions. Ok – good job Joe and good job Joe’s sibling for not cleaning up. Joe’s able to answer that question on the achievement/growth test. (Incidentally, at this level the difference of 1-2 questions can really affect where you fall in the percentile depending on the question.) Joe’s put into the extended learning program (ELP).

But maybe Sally is an only child or maybe she had a bad day. She doesn’t get the fraction question, and thus is 5 points lower than Joe. She doesn’t get ELP. Her ability may be exactly the same as Joe’s, but she will not get the extra math that Joe is receiving by being in ELP. A few months go by, and now Joe has learned a ton more in ELP (as well as the other children there) and they all are in a higher percentile (because they’ve formally learned more) than children not given that opportunity. By middle school, they are put in more advanced math classes and thus the cycle continues.

Let’s further complicate this by adding gender. As a mom of twins, I can tell you the social structure of girls is WAAAAAYYYY different than boys. And maybe not all girls follow it, but geez louise, some of the stories I could tell you starting over 25 years ago are startling. Girl culture can be super complicated and intense. I mean seriously – 2nd graders in full blown psychological warfare against each other for MONTHS.

I once had a 7 year old girl call another 7 year old girl from MY HOUSE, unbeknownst to me, to tell her not to come to my son’s birthday party. She told the other girl that my son didn’t want her there. My son had no idea that the call even occurred. WHY did said caller do it? Because she had a crush on my son. That was not a fun conversation to have with the callee’s mom BTW.

All this to say, I’m willing to bet that the stress that comes with the complicated lives of young girls may also, occasionally, result in just an ever so slightly lower score on the one test that changes everything when all other things are equal. And I haven’t gone down the rabbit hole yet, but I’ll bet there are other things besides stress with girls that can have an adverse effect. And that’s just the tip of the iceberg. The same concept could apply to many kids: neurodiverse, POC, stressful conditions at home, etc. An entire group of kids, with the complete capability to be able to learn the higher level math, are not given the opportunity.

What’s that you say? Teach it to them yourself? Yep – great idea and we do that in our family, but not every family has that option. Single parents, lower educated, overworked, sick, caregivers, etc. all may have limitations on being able to do additional at-home education. Even free options for students like Khan Academy (which I love BTW) may not even be available or known to many parents.

I don’t know the answer, but as someone who is personally having to deal with an unresponsive school system about a highly advanced young lady that is fully capable and willing, I’m mad. I’m mad that the boy in the family is being given the chances from the school, while the girl is not. And with everything being the same for both kids learning at home, he’s been advancing more because he gets to have extra time at school with more advanced topics. All because of a few points on 1 test many years ago.

Have I made you mad? Good. Now what are we going to do about it? For all those kids missing out.

-

I got 99 problems and Fabric Shortcuts on a P1 is one of them

If you’ve bought a P1 reserved capacity, you may have been told “No worries – it’s the same as an F64!” (Or really, this is probably the case for any P to F SKU conversion.) Just as you suspected – that’s not entirely accurate. And if you are trying to create Fabric shortcuts on a storage account that uses a virtual network or IP filtering – it’s not going to work.

The problem seems to lie in the fact that a P1 is not really an Azure resource in the same way an F SKU is. So when you go to create your shortcut following all the recommended settings (more on that in a minute), you’ll wind up with some random authentication message like the one below: “Unable to load. Error 403 – This request is not authorized to perform this operation”:

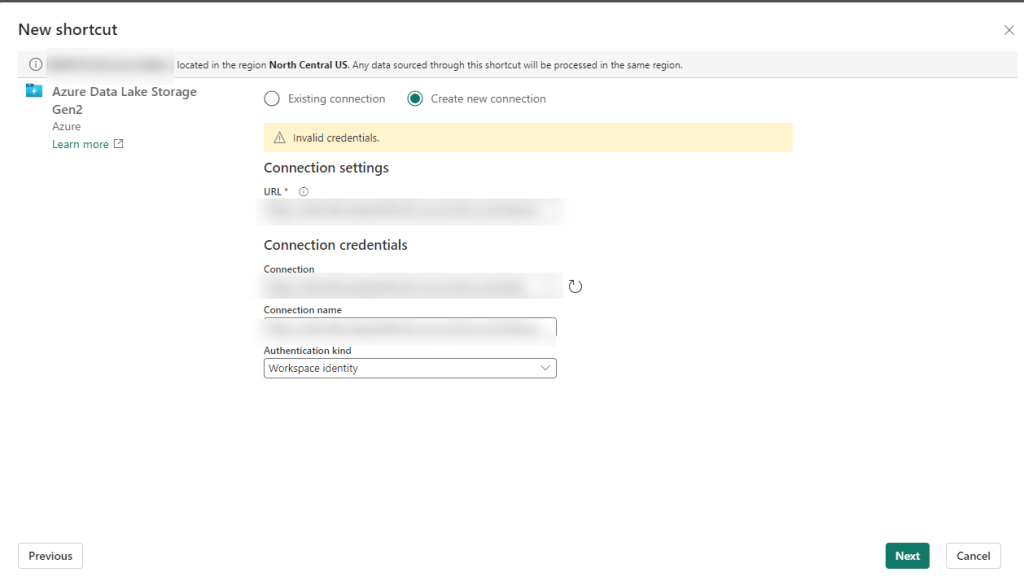

You may not even get that far and just have some highly specific error message like “Invalid Credentials”:

Giving the benefit of the doubt – you may be thinking there was user error. There are a gazillion settings, maybe we missed one. Maybe, something has been updated in the last month, week, minute… Fair enough – let’s go and check all of those.

Building Fabric shortcuts, means you are building OneLake shortcuts. So naturally I first found the Microsoft Fabric Update Blog announcement that pertained to this problem: Introducing Trusted Workspace Access for OneLake Shortcuts. That walks through this EXACT functionality, so I recreated everything from scratch and voila! Except no “voila” and still no shortcuts.

Okay, well – no worries, there’s another link at the bottom of the update blog: Trusted workspace access. Surely with this official and up-to-date documentation, we can get the shortcuts up and running.

Immediately we have a pause moment with the wording “can only be used in F SKU capacities”. It mentions it’s not supported in trial capacities (and I can confirm this is true), but we were told that a P1 was functionally the same as an F64 so we should be good right?

Further down the article, there is a mention of creating a resource instance rule. If this is your first time setting all of this up, you don’t even need this option, but it may be useful if you don’t want to add the Exception “Allow Azure services on the trusted services list to access this storage account.” to the networking section of your storage account. But this certainly won’t fix your current problem. Still, good to go through all this documentation and make sure you have everything set up properly.

One additional callout I’d like to make is the Restrictions and Considerations part of the documentation. It mentions: Only organizational account or service principal must be used for authentication to storage accounts for trusted workspace access. Lots of Microsoft support people pointed to this as our problem, and I had to show them not only was it not our problem, but it wasn’t even correct. It’s actually a fairly confusing statement because a big part of this article is setting up the workspace identity, and then that line reads like you can’t use workspace identity to authenticate. I’m happy to report using the workspace identity worked fine for us once we got our “fix” in (I use that term loosely), and without the fix we still had a problem if we tried to use the other options available for authentication (including organizational account).

After some more digging, on the Microsoft Fabric features page, we see that P SKUs are actually not the same as F SKUs in some really important ways. And a shortcut to an Azure Storage Account whose networking is set to anything but Public network access: Enabled from all networks (which BTW is against Microsoft best practice recommendations) is not going to work on a P1.
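
To keep the moving parts straight, here is a tiny Python sketch that sums up what we observed. It is not an official rule from Microsoft, just this post's findings written down as a function. The function name and its parameters are made up for illustration.

```python
def shortcut_should_work(sku_family: str,
                         public_access_all_networks: bool,
                         is_trial: bool = False) -> bool:
    """Rough summary of this post's findings for shortcuts to Azure Storage.

    sku_family: "P" or "F" (hypothetical parameter, not a real API value).
    """
    # Storage open to all networks does not need trusted workspace access.
    # Assumed to hold regardless of SKU; the post only confirms it for a P1.
    if public_access_all_networks:
        return True
    # Behind a virtual network or IP filter you need trusted workspace access,
    # which the documentation limits to (non-trial) F SKU capacities.
    return sku_family.upper() == "F" and not is_trial


for family in ("P", "F"):
    print(family, shortcut_should_work(family, public_access_all_networks=False))
```

Run as-is, this prints `P False` and `F True`, which is the whole problem in two lines.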

The Solution

You are not going to like this. You have 2 options. The first one is the easiest, but in my experience very few enterprise companies will want to do this since it goes against Microsoft’s own best practice recommendation: Change your storage account Network setting to: Public network access enabled from all networks.

Don’t like that option? You’re probably not going to like #2 either. Particularly if you have a long time left on your P SKU capacity. The solution is to spin up an F SKU. In addition to your P SKU. And as of the writing of this article, you cannot convert a P SKU to an F SKU, meaning if you got that reserved capacity earlier this year – you are out of luck.

In our case, we have a deadline for moving our on-prem ERP solution to D365 F&O (F&SCM) and that deadline includes moving our data warehouse in parallel. Very small window for moving everything and making sure the business can still run on a new ERP system with a completely new data warehouse infrastructure.

We’d have to spend a minimum of double what we are paying now, 10K a month instead of 5K a month, and that’s only if we bought a reserved F64 capacity. If we wanted to do pay-as-you-go, that’s 8K+ more a month, which we’d probably need to do until we figure out if we should do 1 capacity, or multiple (potentially smaller) capacities to separate prod/non-prod/reporting environments. We are now talking in the range of over 40K additional at a minimum just to use the shortcut feature, not to mention we currently only use a tiny fraction of our P1 capacity. I can’t even imagine for companies that purchased a 3-year P capacity recently. (According to MS, you could have bought that up until June 30 of this year.)
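
If you want to run the same back-of-the-napkin math for your own situation, a minimal sketch follows. The monthly figures are the rounded ones from this post. The number of months is purely a placeholder (the post does not give one), so plug in however long you have left on your P SKU. The names are made up for illustration.

```python
def extra_cost(months: int, extra_per_month: int) -> int:
    """Additional spend for running an F64 alongside an existing P1."""
    return months * extra_per_month


RESERVED_F64_EXTRA = 5_000  # from the post: roughly 10K total vs 5K today
PAYG_F64_EXTRA = 8_000      # from the post: "8K+" more per month

months_left = 8  # hypothetical placeholder, not a figure from the post

print("reserved:", extra_cost(months_left, RESERVED_F64_EXTRA))
print("pay-as-you-go:", extra_cost(months_left, PAYG_F64_EXTRA))
```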

Ultimately many companies and Data Engineers in the same position will need to decide if they do their development in Fabric, Synapse, or something else altogether. Or maybe, just maybe, Microsoft can figure out how to convert that P1 to an F64. Like STAT.

-

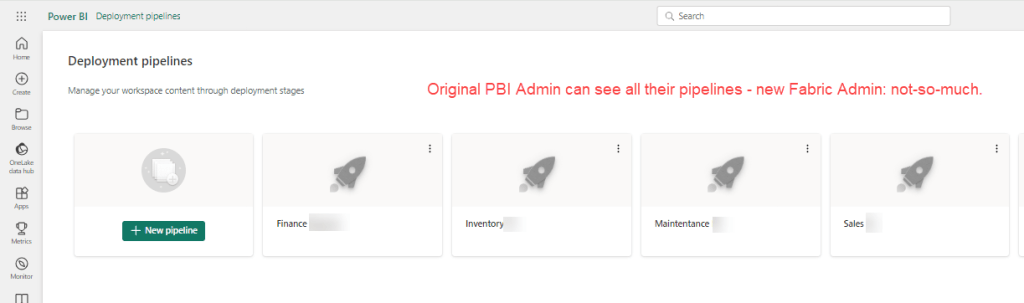

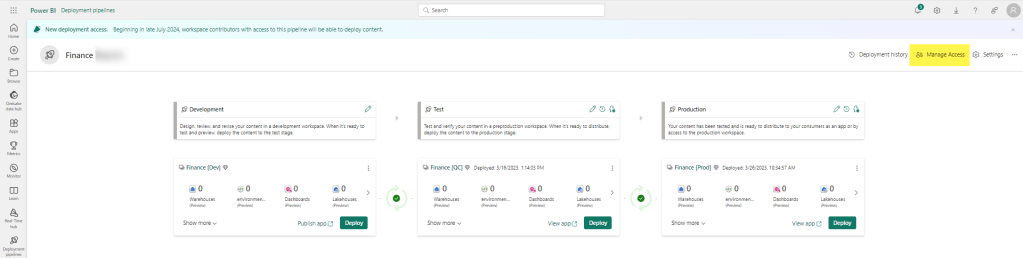

Why Can’t My Fabric Admin see a Deployment Pipeline?

You’ve assigned your Fabric Administrators and you’ve sent them off to the races to go see and do all the things. Except they can’t see and do all the things. OR CAN THEY? <cue ominous music>

Mango, crazy-eyed with anticipation about a new adventure.

At first glance, Fabric Administrator #2 can’t see any of the workspaces PBI Administrator #1 created, some of them years ago. Let’s go ahead and fix that first over here.* Once you’ve gotten that all straightened out and they can see all the workspaces, you think you are in the clear for deployment pipelines? Nope, same issue: PBI Administrator #1 can see all of the deployment pipelines and newly minted Fabric Administrator #2 can see none. Waaa-waaaa (sad trombone).

*(If you only need the user / user group to see the workspaces relative to the pipeline, then read on for a helpful hint that performs the double duty of adding the security to workspaces and deployment pipelines at the same time).

To be fair, I’m fairly certain this would be the same case for 2 PBI Administrators, but since the Fabric genie has been let out of the bottle, I can’t say for sure.

What’s an admin to do??? I mean seriously, what does Admin even mean anymore?!?

Well, if we are perfectly honest, there is a reason we’ve been telling you to set up User Groups. Because if the admin that set up the pipeline had given an admin user group access to the deployment pipeline to begin with, then we wouldn’t be here.

Photo by cottonbro studio on Pexels.com

(Oh yea, well if you want to be that way then I say security should really be part of the create-a-pipeline option.) Look, do you want to play the blame game or do you want to find a solution? That’s what I thought.

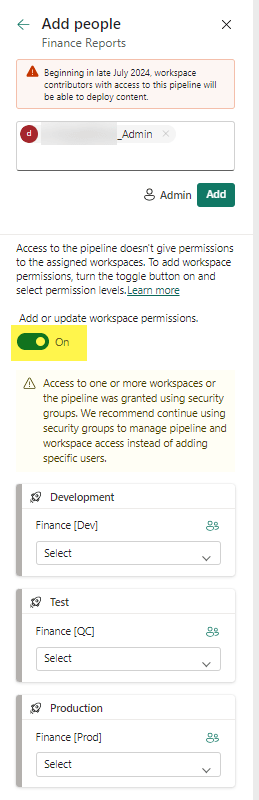

To fix, go into the deployment pipeline and click on the Manage Access link.

Then add your USER GROUP to the Access list with Admin rights.

If you haven’t already added the group to the workspace – then here is your chance to do it all together. Just switch the toggle button to Add or update workspace permissions to ON.

You can then set a more granular access to each workspace for the user group (or user, sigh) in question. Access options include Admin, Contributor, Member, and Viewer (though we may see more down the road).
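
If you’d rather script this than click through the UI, the Power BI REST API has, as far as I know, an “Update Pipeline User” endpoint that covers the pipeline half of Manage Access. The sketch below only builds the request; it doesn’t send anything. The IDs and token are placeholders and the function name is made up, so check the current API documentation before relying on it. It also does not touch workspace permissions, which the toggle above handles for you.

```python
import json
import urllib.request


def build_pipeline_access_request(pipeline_id: str,
                                  group_object_id: str,
                                  token: str) -> urllib.request.Request:
    """Build (but do not send) a request granting a group Admin on a pipeline."""
    # Endpoint as I understand it from the Power BI REST API docs (assumption).
    url = f"https://api.powerbi.com/v1.0/myorg/pipelines/{pipeline_id}/users"
    body = {
        "identifier": group_object_id,  # object ID of the Entra security group
        "principalType": "Group",       # a group, not an individual user
        "accessRight": "Admin",
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values only; swap in your own pipeline ID, group ID, and token.
req = build_pipeline_access_request(
    "00000000-0000-0000-0000-000000000000",
    "11111111-1111-1111-1111-111111111111",
    "ACCESS_TOKEN_PLACEHOLDER",
)
print(req.get_method(), req.full_url)
# To actually send it: urllib.request.urlopen(req)
```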

That’s it. Throw a message in the comments if you’ve encountered any similar hiccups.

-

User Can’t Create a Workspace in Fabric

Recently my boss reached out to me with an interesting question: How can she create a workspace in Fabric’s Data Engineering section? When she clicked on create a workspace, and then the Advanced tab, her License mode options were restricted to Pro or Premium per-user. She didn’t have any of the Fabric options.

Our company is still under a Premium Capacity subscription which we will roll into a Fabric one once it completes, but according to Microsoft, our P1 Premium Capacity license is the same as an F64 license. In the Admin portal under Tenant settings, we have Microsoft Fabric options and even have the option Users can create Fabric items enabled. So what gives?

It turns out that in certain scenarios, you will also need to set this in the Capacity settings. In our case, we are keeping things pretty tight until we have our standards set up and will roll things out to small groups. But to allow small groups to have access to this, you can add them to the contributor role under Capacity settings. (I mean you could add people one by one, or enable for the whole org – you do you – but I’d advise against it. It’s hard to put the genie back into the bottle.) You could also add them to the admin role in the Capacity settings, but again – I’d advise against it. These settings are ever changing and it’s hard enough keeping track of everything everywhere.

Yes, you can add AD/Entra groups instead of users, and that’s really the route you want to go if you are dealing with anything large scale. I’m reminded of the Wizard of Oz when he says “ignore the man behind the curtain!” as my name is clearly listed in the image instead of a group, but that’s because I wanted to show a real world example.

Once you have added a user/group, click Apply. It took about 5-15 seconds for it to work its way through our system. Once that was complete, my boss had the Premium Capacity license available (which would allow her to create non-PBI Fabric items).

What are some non-intuitive things you’ve found getting your company up and running on Fabric?
